Build vs. Buy: Why You Can’t Outsource Your Practice’s AI Brain

The 5% Survival Rate

On its face, this doesn't add up. A 2025 MIT study reported a staggering statistic about enterprise technology: of the corporate AI pilots it examined, only about 5% produced measurable, scalable value. That means roughly 95% of those pilots absorbed massive amounts of capital without delivering any measurable shift in productivity.

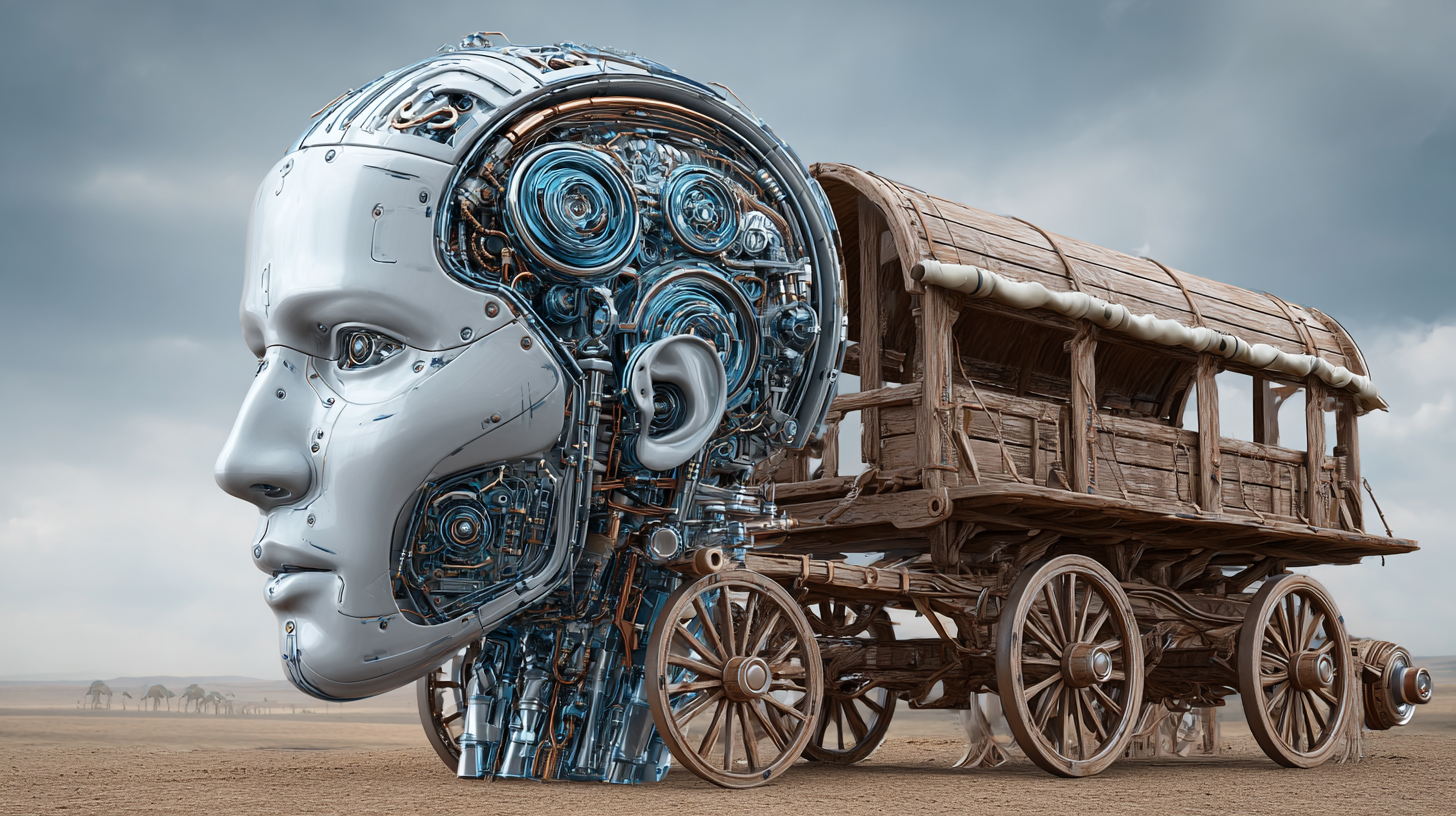

They are failing because they are treating AI like a tool instead of an architecture. They are taking incredibly advanced AI and layering it directly on top of legacy workflows that were designed decades ago to accommodate human limitations—like 9-to-5 hours, manual handoffs, and cognitive fatigue. It's exactly like strapping a modern combustion engine onto a wooden horse-drawn carriage. The vehicle is structurally incapable of handling the engine's output. The wheels shatter. The suspension breaks. You haven't built a car; you've just built a faster, more dangerous carriage.

When Tacit Knowledge Stays Locked in Your Head

When I was a resident, I worked 144-hour weeks. The healthcare system used us as its labor force. I was the phlebotomist, the orderly, the transcriber, and the executive assistant to the attending, all at once. I learned early on that the most important clinical rules are never written down in an employee handbook. They live in tacit knowledge: the procedural muscle memory of knowing exactly how much buffer time a specific practitioner needs between complex appointments, or of reading the emotional subtext of a patient who is struggling to articulate their symptoms.

The structural insight here is that if your tacit knowledge stays locked in your head, an AI agent cannot read your rules, and therefore it cannot execute your workflow safely. This is the fundamental flaw in the "Buy" model of AI. When you buy a generic, off-the-shelf SaaS product to run your patient intake or handle your CRM, you are buying a system that does not know your clinical boundaries. It doesn't know your escalation triggers. It doesn't know your neuroaesthetic standards. You cannot mathematically code a gut feeling about a patient's emotional state into a generic language model.
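A rule that has been written down, by contrast, is something software can read. As a minimal sketch of what externalizing one tacit rule might look like, the Python below encodes the buffer time a practitioner needs after a complex appointment. The practitioner keys, the minute values, and the names `BUFFER_MINUTES`, `Appointment`, and `earliest_next_start` are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical, illustrative values: the buffer each practitioner needs
# after a complex appointment, written down instead of remembered.
BUFFER_MINUTES = {"dr_a": 20, "dr_b": 10}
DEFAULT_BUFFER_MINUTES = 15  # assumed fallback for anyone not listed


@dataclass
class Appointment:
    practitioner: str
    start: datetime
    duration_minutes: int
    complex_case: bool = False


def earliest_next_start(appt: Appointment) -> datetime:
    """Return the earliest time the next appointment may begin."""
    end = appt.start + timedelta(minutes=appt.duration_minutes)
    if not appt.complex_case:
        return end  # routine visits need no extra buffer in this sketch
    buffer = BUFFER_MINUTES.get(appt.practitioner, DEFAULT_BUFFER_MINUTES)
    return end + timedelta(minutes=buffer)
```

The point of the sketch is only that the rule now lives in a table an agent can look up. The emotional read on a patient stays with the human.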

The 5 Worker Bees of the Agent OS

So here is what I am taking from this, and how we are building it at Ceyise Studios®. To make an AI system work in a highly regulated, high-trust environment, you have to fundamentally rethink the entire vehicle. You have to shift from a "tool-centric" model to an "Agent OS". In an Agent OS, you deploy a multi-agent framework where specialized AI agents collaborate on complex workflows. Think of them as the 5 worker bees of your practice:

The Assistant: Handles the direct, front-line interaction with the patient, providing a calming, responsive interface.

The Analyst: Synthesizes historical data, recognizes patterns in patient logs, and evaluates risk matrices.

The Tasker: Executes bounded, repetitive administrative actions like updating the CRM and triggering email sequences.

The Orchestrator: Manages the routing, ensuring data flows securely between the app, the engine, and the clinical medical record.

The Guardian: Monitors compliance, enforcing strict scope-of-action constraints and triggering human escalation when clinical boundaries are reached.

These agents don't replace human empathy; they aggressively protect the space where human empathy operates by handling the administrative friction.
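To make the division of labor concrete, here is a rough Python sketch of how an Orchestrator might pass every request through a Guardian before a front-line agent acts. It is an illustration under stated assumptions, not the system described above: the action names, the `ALLOWED_ACTIONS` table, and the `clinical_question` flag are hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum


class Role(Enum):
    ASSISTANT = "assistant"
    ANALYST = "analyst"
    TASKER = "tasker"


# Hypothetical scope-of-action table: what each role is allowed to do.
ALLOWED_ACTIONS = {
    Role.ASSISTANT: {"greet_patient", "collect_intake"},
    Role.ANALYST: {"summarize_history"},
    Role.TASKER: {"update_crm", "send_email_sequence"},
}


@dataclass
class Request:
    role: Role
    action: str
    clinical_question: bool = False  # True when clinical judgment is needed


class Guardian:
    """Enforces scope-of-action constraints and decides on escalation."""

    def review(self, request: Request) -> str:
        if request.clinical_question:
            return "escalate_to_human"
        if request.action not in ALLOWED_ACTIONS[request.role]:
            return "blocked"
        return "allowed"


@dataclass
class Orchestrator:
    """Routes each request through the Guardian before any agent acts."""
    guardian: Guardian = field(default_factory=Guardian)

    def route(self, request: Request) -> str:
        verdict = self.guardian.review(request)
        if verdict != "allowed":
            return verdict
        return f"{request.role.value}:{request.action}"
```

In this sketch, `Orchestrator().route(Request(Role.TASKER, "update_crm"))` is dispatched to the Tasker, while the same Tasker asking to `collect_intake` is blocked because that action sits outside its scope.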

Governance is the Bridge

My license could be on the line. I'm not interested in making things up, and I'm not interested in generic tech that hallucinates medical advice. To use AI safely as a physician extender, you must build it, or have it built for you, so that the system is grounded in your specific clinical IP.

You have to externalize your internal knowledge. You must define the exact routing protocols, the explicit thresholds for human escalation, and the rigorous audit trails. You don't buy an AI brain; you architect an agentic workflow governed by your own rules. That is where I see AI agentics actually serving boutique practices, rather than breaking them.
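The three pieces named above (routing protocols, escalation thresholds, audit trails) can be sketched in a few lines of Python. Everything specific here is an assumption for illustration: the `0.7` threshold, the routing table, the idea of a numeric risk score, and the names `Policy`, `AuditTrail`, and `decide`.

```python
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Policy:
    # Hypothetical threshold: risk scores at or above this go to a human.
    escalation_threshold: float = 0.7
    # Hypothetical routing table: request type -> agent that handles it.
    routes: dict = field(default_factory=lambda: {
        "scheduling": "tasker",
        "intake": "assistant",
    })


@dataclass
class AuditTrail:
    entries: list = field(default_factory=list)

    def record(self, request_type: str, risk: float, decision: str) -> None:
        # Every decision is logged with a UTC timestamp, whether or not
        # it was escalated.
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "request_type": request_type,
            "risk": risk,
            "decision": decision,
        })

    def export(self) -> str:
        return json.dumps(self.entries, indent=2)


def decide(policy: Policy, trail: AuditTrail, request_type: str, risk: float) -> str:
    """Route a request, escalating on high risk or an unknown request type."""
    if risk >= policy.escalation_threshold or request_type not in policy.routes:
        decision = "human_review"
    else:
        decision = policy.routes[request_type]
    trail.record(request_type, risk, decision)
    return decision
```

One design choice worth noting in this sketch: a request type that is missing from the routing table goes to a human rather than to a default agent, so anything the rules do not cover fails toward review.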

References & Further Reading

MIT Project NANDA. The GenAI Divide: State of AI in Business 2025 (July 2025). Challapally, A., Pease, C., Raskar, R., & Chari, P. fortune.com/2025/08/18/mit-report-95-percent-generative-ai-pilots-at-companies-failing-cfo

McKinsey & Company. The Agentic Organization: Contours of the Next Paradigm for the AI Era (September 2025). Covers the shift from tool-centric to agentic organizational models, governance requirements, and multi-agent frameworks. mckinsey.com/capabilities/people-and-organizational-performance/our-insights/the-agentic-organization-contours-of-the-next-paradigm-for-the-ai-era

Microsoft Open Source. Agent Governance Toolkit (April 2026). Documents the Agent OS concept as a policy engine for governing autonomous AI agents in regulated environments. opensource.microsoft.com/blog/2026/04/02/introducing-the-agent-governance-toolkit-open-source-runtime-security-for-ai-agents